For the first time in many years, I am teaching a College Prep Algebra 1 class with a fantastic group of 9th graders. Nearing the end of our linear functions unit, my colleagues and I discussed a desire to have some sort of culminating activity. And while I have used drawing projects often in some courses, in Algebra 1 such tasks have often left me feeling unfulfilled. Too many horizontal and vertical lines for my liking, I suppose.

I recalled reading about a potential golf-related task on Twitter. To be honest, I don’t recall whose exact post provided the inspiration here (note: I am thinking it was Robert Kaplinsky or John Stevens, but I may be wrong. If anyone locates a source, I’ll edit this and provide ample credit), but it felt like a game-related task could provide the strategy and fun elements which tend to be missed by drawing tasks.

HOW THE GOLF CHALLENGE WORKS:

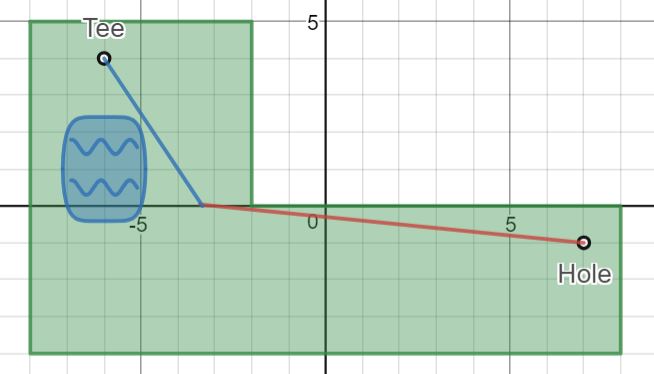

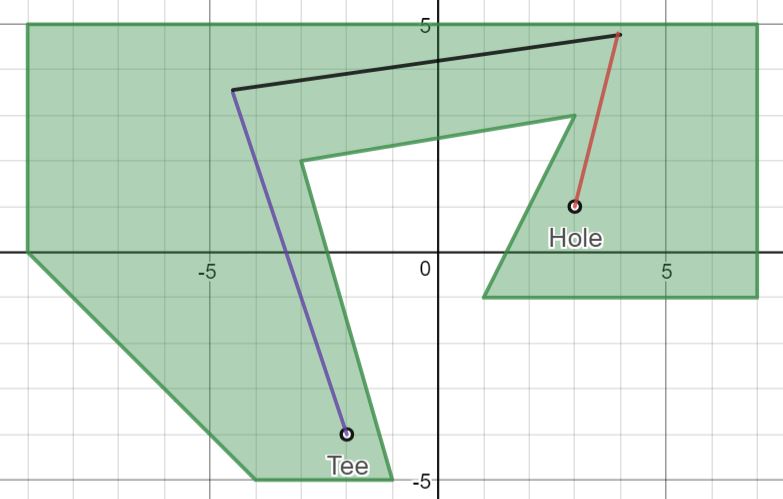

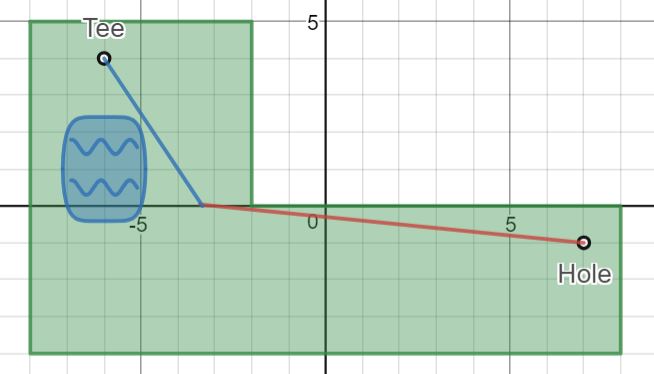

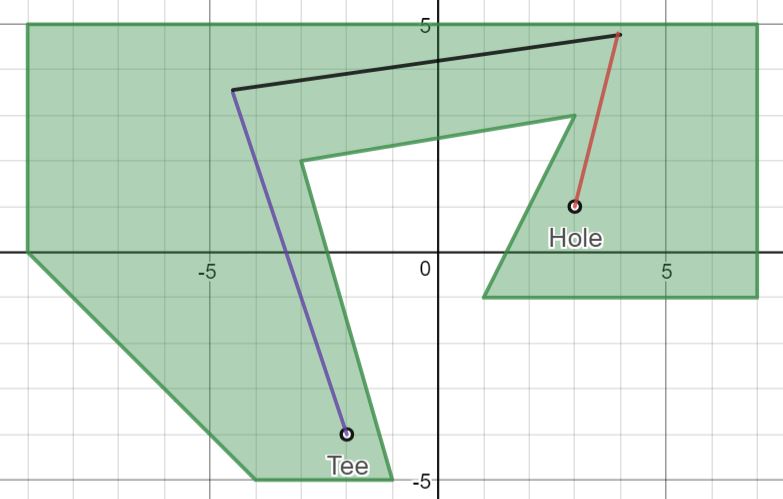

The goal: write equations of lines which connect the “tee” to the “hole”. Use domain and/or range restrictions to connect your “shots”. Try to reach each hole in a minimal number of shots. Leaving the course (the green area) or hitting “water” is forbidden. All vertical or horizontal lines incur a one-stroke penalty.
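For anyone who wants to check a pair's work quickly, here is a minimal Python sketch of the rules above. It is not part of the original activity: the function names `shot_equation` and `score` are hypothetical, it assumes each shot is given by integer endpoints, and it assumes the penalty means one extra stroke per vertical or horizontal shot. It does not check for water or for leaving the course.

```python
from fractions import Fraction

def shot_equation(p, q):
    """Return a Desmos-style restricted line through integer points p and q."""
    (x1, y1), (x2, y2) = p, q
    if x1 == x2:
        # Vertical shot: written as x = c with a range restriction on y.
        lo, hi = sorted((y1, y2))
        return f"x = {x1} {{{lo} <= y <= {hi}}}"
    m = Fraction(y2 - y1, x2 - x1)   # slope as an exact fraction
    b = y1 - m * x1                  # y-intercept
    lo, hi = sorted((x1, x2))
    return f"y = ({m})x + ({b}) {{{lo} <= x <= {hi}}}"

def score(points):
    """Count strokes for a path of points (assumed rule: +1 per vertical or horizontal shot)."""
    shots = list(zip(points, points[1:]))
    penalties = sum(1 for (x1, y1), (x2, y2) in shots if x1 == x2 or y1 == y2)
    return len(shots) + penalties

# Example: tee at (0, 1), one turn at (4, 3), hole at (4, 6).
path = [(0, 1), (4, 3), (4, 6)]
for start, end in zip(path, path[1:]):
    print(shot_equation(start, end))
print("strokes:", score(path))
```

In this example the second shot is vertical, so the two-shot path counts as three strokes under the assumed penalty rule.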

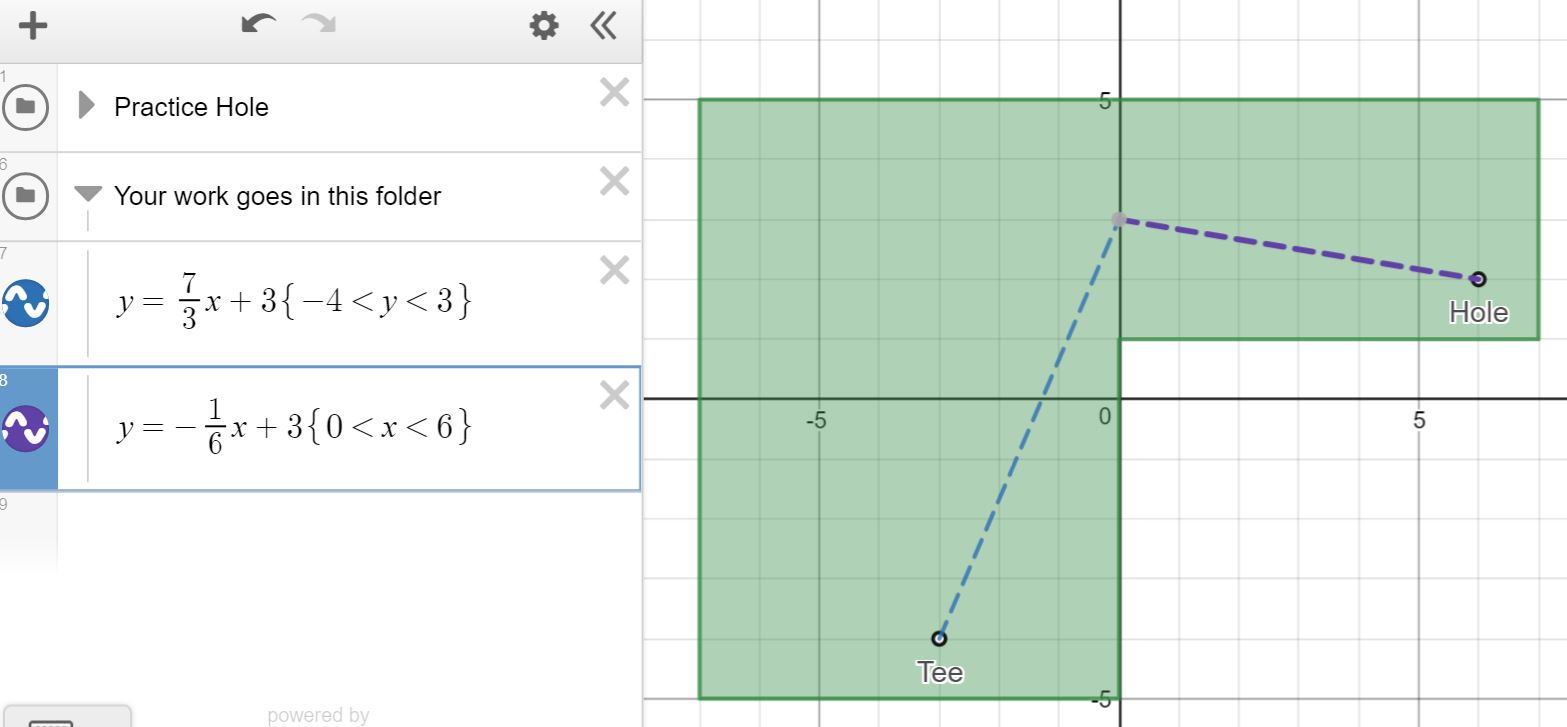

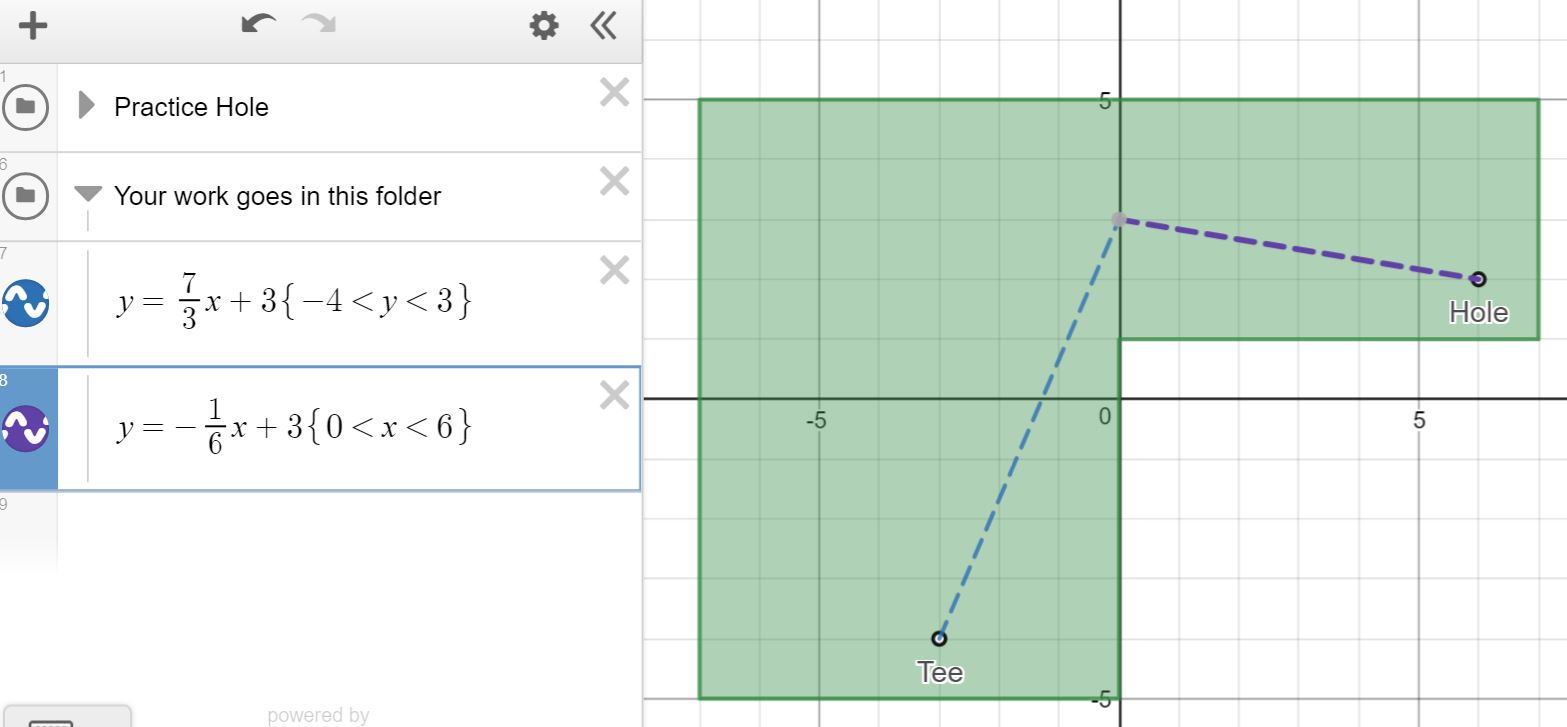

On the day before the task, the class worked through a practice hole. Besides understanding the math task, there are also a few Desmos items for students to understand:

- Syntax for domain / range restrictions

- Placing items into folders

- Turning folders on/off
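As a quick illustration of the restriction syntax, here is a made-up shot (not one of the holes in my file): a line with slope 1/2 that is only drawn from x = 0 to x = 4, followed by a vertical segment with a range restriction.

```latex
y = \tfrac{1}{2}x + 1 \ \{0 \le x \le 4\}
\qquad
x = 4 \ \{3 \le y \le 6\}
```

In Desmos, students would type these as `y = (1/2)x + 1 {0 <= x <= 4}` and `x = 4 {3 <= y <= 6}`; the restriction goes in curly braces right after the equation.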

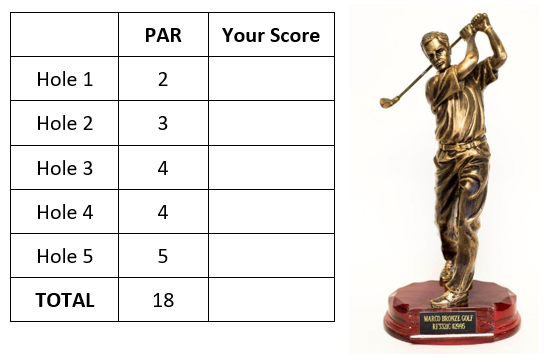

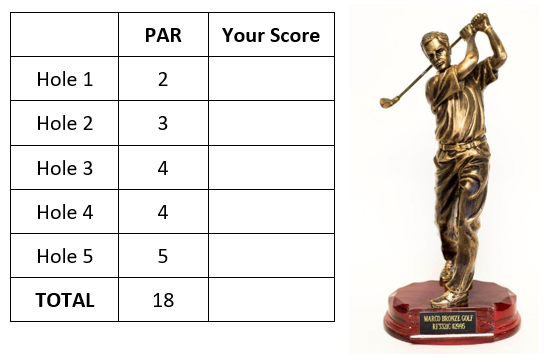

For the actual task, I shared a link to a Desmos file with 5 golf holes. I tried to build holes which increased in difficulty. In practice, the task took an entire class period (75 minutes), and students worked in pairs to discuss, plan, and complete the holes. All students then uploaded their graphs to Canvas for my review, and filled out a “scorecard” which included “par” for each hole. It became quite competitive and fun!

In the end, there is not too much I would change here. Perhaps I would add some more complex holes. I’d also like to provide an opportunity for students to design and share their own golf holes, and study the “engine” which built mine. I hope your class has fun with it! Please share your suggestions, questions, and adaptations.
