The Common Core Standards provide an exciting opportunity for statistics education, with inference concepts starting informally in middle school and sampling distributions with inference moving into the high school mainstream. Under the “Statistics and Probability” category, we find the following:

Make inferences and justify conclusions from sample surveys, experiments, and observational studies

CCSS.MATH.CONTENT.HSS.IC.B.4

Use data from a sample survey to estimate a population mean or proportion; develop a margin of error through the use of simulation models for random sampling.

But many of our high school teacher colleagues will need support to help their students think statistically. AP Stats teachers, often the loneliest folks in their departments, will now need to share their expertise with colleagues who are less inclined toward statistics. Below are snapshots from a lesson on sampling and margin of error that I used with my 9th-grade classes; many of the ideas are adapted from my AP Stats teaching experience.

STARTING WITH SAMPLING DISTRIBUTIONS

Students in this class had already completed a probability unit that included conditional probability, binomial settings, and the normal distribution. After a discussion of sampling techniques, I wanted students to build a conceptual understanding of margin of error before diving into formulas. Here’s what I did:

- The class was split into two large groups.

- Each side of the room was given the task of flipping virtual coins using a graphing calculator.

- For one side of the room, 25 coins were flipped. For the other side, 100 coins were flipped.

- The “coins” were sorted and counted, and each proportion of heads was communicated to the team captain, who recorded the result on a group graph.
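For anyone who wants to try the two-group activity without graphing calculators, here is a minimal Python sketch of the same idea. It assumes fair coins, swaps Python’s `random` module in for the calculators, and uses a hypothetical 500 simulated students per side so the difference in spread is easy to see; `simulate_class` is an illustrative helper name, not part of any tool mentioned in the lesson.

```python
import random
import statistics


def simulate_class(num_students, coins_per_student, seed=None):
    """Return one proportion of heads per student, each student flipping
    coins_per_student fair virtual coins."""
    rng = random.Random(seed)
    proportions = []
    for _ in range(num_students):
        # Count a head whenever a uniform random number falls below 0.5.
        heads = sum(1 for _ in range(coins_per_student) if rng.random() < 0.5)
        proportions.append(heads / coins_per_student)
    return proportions


# Hypothetical setup: 500 simulated students per side of the room
# (a real class is far smaller; more students just make the pattern clearer).
side_25 = simulate_class(500, 25, seed=1)
side_100 = simulate_class(500, 100, seed=2)

for label, props in [("25 coins", side_25), ("100 coins", side_100)]:
    print(f"{label}: mean = {statistics.mean(props):.3f}, "
          f"SD = {statistics.stdev(props):.3f}")
```

In theory the standard deviation of the proportion of heads is sqrt(0.25 / n), so the 25-coin side should land near 0.10 and the 100-coin side near 0.05: quadrupling the number of coins roughly halves the spread.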

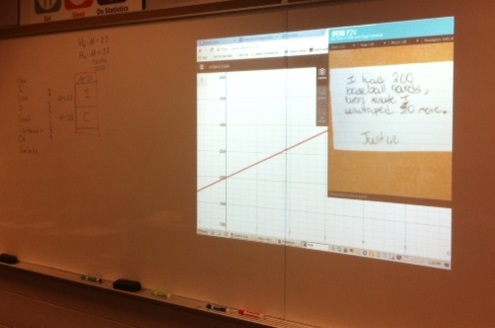

The results for the two groups are shown here.

The class discussion then moves to a comparison of the graphs. How are they similar? How are they different? Clearly, both graphs center around the true proportion (0.5), and the shapes seem similar. But the variability appears to be different. How likely is it that 60% of the coins show heads if 25 coins are flipped? Does this likelihood change with 100 coins? We’re moving toward sampling distributions and inference, without all the scary-sounding language.
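The two questions about 60% heads can also be answered exactly with the binomial distribution the class had already studied. Here is a short sketch, assuming fair coins and reading “60% heads” as “at least 60% heads” (that is, at least 15 of 25 or at least 60 of 100); `prob_at_least` is an illustrative helper name.

```python
from math import comb


def prob_at_least(k, n, p=0.5):
    """P(X >= k) for X ~ Binomial(n, p), summed term by term."""
    return sum(comb(n, x) * p**x * (1 - p)**(n - x) for x in range(k, n + 1))


# 60% heads means at least 15 heads out of 25, or at least 60 heads out of 100.
p25 = prob_at_least(15, 25)
p100 = prob_at_least(60, 100)
print(f"P(at least 60% heads | 25 coins)  = {p25:.4f}")
print(f"P(at least 60% heads | 100 coins) = {p100:.4f}")
```

This gives about 0.21 for 25 coins but only about 0.03 for 100 coins, which puts a number on the difference in variability the students see in the two graphs.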

LINKING SAMPLING TO PUBLIC-OPINION POLLS

In the next part of the lesson, I wanted students to explore public-opinion polls, choose a topic that interested them, and think about the language and structure of poll reports. The site pollingreport.com is ideal for this: it collects and summarizes poll results from many sources, organized by topic.

Student pairs were asked to find one poll of interest, note its source and the number of people surveyed, and write this information on the back board. We then looked for common threads:

- Sources: we found many repeats among the sources chosen (Rasmussen, Gallup, Washington Post, Fox News) and discussed what makes a source of information reliable.

- Sample sizes: most surveys had about 1,000 participants, with one poll using 2,000.

Can surveying just 1,000 people possibly allow us to represent a population? How reliable can we expect a poll of only 1,000 people to be? Time to move on to the last stage of the lesson: simulation.

SAMPLING WITH STATKEY

The great free site StatKey allows us to look at sampling distributions easily and discuss our observations. In my class, each pair had a netbook for exploring the site. We started with a poll about college football, in which 47% of those surveyed registered “support”. If 47% is the true proportion and we survey 1,000 people, how close to 47% should we expect our sample result to be? Is 46% plausible? How about 44%, or even as low as 40%? We set the population proportion and the sample size, and then it’s time to draw some samples.

The sample proportions center around 47%, but here is the follow-up question for the class:

A large majority of the possible samples seem to be within ___ % of 47%.

Certainly, we see that ALL of the samples are within 10 percentage points, but can we narrow that window? After some discussion, the class agreed that 3 percentage points was a reasonable window for capturing a large portion of the samples. How many of the samples here are within 3 percentage points of the “true” proportion of 47%? StatKey can take care of that work for us.
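StatKey does this sampling in the browser; the sketch below imitates the same experiment in Python so the numbers can be checked offline. It assumes a true proportion of 0.47, samples of 1,000 people, and a hypothetical 2,000 simulated samples, then counts how many samples land within 3 percentage points of 47%. The helper name `sample_counts` is illustrative, not a StatKey feature.

```python
import random


def sample_counts(p, n, num_samples, seed=None):
    """Draw num_samples random samples of size n from a population in which a
    proportion p say "support"; return the number of supporters in each sample."""
    rng = random.Random(seed)
    return [sum(rng.random() < p for _ in range(n)) for _ in range(num_samples)]


p_true, n = 0.47, 1000
counts = sample_counts(p_true, n, num_samples=2000, seed=42)

# Compare whole-number counts to avoid floating-point issues at the window edges:
# "within 3 percentage points of 47%" means 440 to 500 supporters out of 1,000.
target, tolerance = round(p_true * n), round(0.03 * n)
within = sum(abs(c - target) <= tolerance for c in counts)
share_within = within / len(counts)

print(f"Sample proportions range from {min(counts) / n:.3f} to {max(counts) / n:.3f}")
print(f"{share_within:.1%} of samples fall within 3 points of 47%")
```

Expect a share of roughly 95%, which is the intuition behind a margin of error of about 3 points for a poll of 1,000.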

As the unit progressed, StatKey was always projected on the board so we could verify our margin-of-error calculations and connect them back to the sampling distribution ideas.
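The post doesn’t say which margin-of-error formula the class eventually used. A common textbook choice, assumed here, is the 95% normal-approximation formula z * sqrt(p̂(1 - p̂) / n) with z = 1.96, shown alongside the quick 1/sqrt(n) rule of thumb. This sketch compares them for the poll sizes the class found (1,000 and 2,000); `margin_of_error` is an illustrative helper name.

```python
from math import sqrt


def margin_of_error(p_hat, n, z=1.96):
    """Approximate 95% margin of error for a sample proportion,
    using the normal approximation z * sqrt(p_hat * (1 - p_hat) / n)."""
    return z * sqrt(p_hat * (1 - p_hat) / n)


for n in (1000, 2000):
    moe = margin_of_error(0.47, n)
    print(f"n = {n}: margin of error about {moe:.1%}, "
          f"rule of thumb 1/sqrt(n) about {1 / sqrt(n):.1%}")
```

For n = 1,000 and p̂ = 0.47 the formula gives about 3.1%, consistent with the 3-point window the class settled on from the simulation; doubling the sample to 2,000 shrinks it only to about 2.2%.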

Wanting to stress contexts from day 1, I used the site